This article was motivated by a VMTN thread posted by a VMware user, and I see that several other users have reported the same usability issue. In this post, I share my understanding of this “empty EVC cluster” behavior, along with a simple workaround.

Update 03/20: Great news! The empty EVC cluster issue discussed in this post is fixed in the latest vCenter patches. With the new vCenter patch, an empty EVC cluster does NOT expose the new cpuids by default; instead, it exposes CPU features based on the first host added to the EVC cluster. Once you have patched your vCenter Server, I recommend running the script below to see the new empty EVC cluster behavior for yourself.

1. What is the issue?

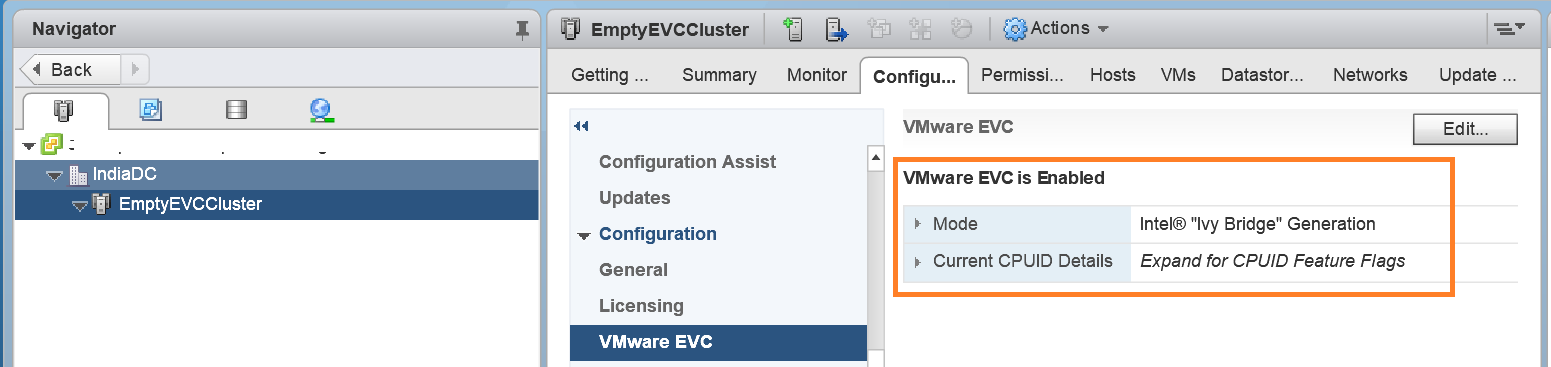

Users are NOT able to add a host to an empty EVC cluster after applying the latest vCenter patch, which was released as part of Hypervisor-Assisted Guest Mitigation on 9th Jan, 2018. To explore this further, I created an empty EVC cluster on vCenter 6.5 U1e, as shown below.

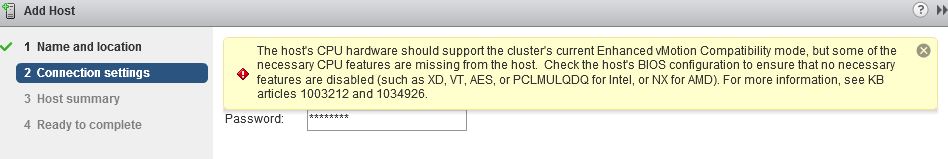

As you can see, I have created an empty EVC cluster with the EVC mode set to “Intel-IvyBridge”. I then tried adding a host on which the corresponding ESXi patch is NOT applied. As reported in the VMTN thread, the operation fails with an error in the web client.

2. Why does it fail with this vCenter 6.5 U1e patch?

As you might know, the VMware patches released for Hypervisor-Assisted Guest Mitigation expose three new cpuids: cpuid.IBRS, cpuid.IBPB and cpuid.STIBP. We need to find out whether the “empty EVC cluster” we just created exposes these new cpuids. This is fairly simple to check using one of the EVC API properties described in my tutorial post on EVC vSphere APIs. In section #4 of that tutorial, “Exploring EVC Cluster state”, I wrote a pyVmomi script, getEVCClusterState.py, to get the EVC cluster state. I modified that script slightly to report whether the cluster exposes the new cpuids. Below is the code snippet.

[python]
import sys

from pyVmomi import vim

# get_obj(), content and cluster_name are defined in the rest of the script (not shown here)

# Cluster object for the empty EVC cluster
cluster = get_obj(content, [vim.ClusterComputeResource], cluster_name)

# EVCManager, which exposes the EVC APIs
evc_cluster_manager = cluster.EvcManager()
evc_state = evc_cluster_manager.evcState
current_evcmode_key = evc_state.currentEVCModeKey

if current_evcmode_key:
    print("Current EVC Mode::" + current_evcmode_key)
else:
    print("EVC is NOT enabled on the cluster")
    sys.exit()

# Feature capabilities exposed by the cluster
feature_capabilities = evc_state.featureCapability

# cpuids introduced by the Hypervisor-Assisted Guest Mitigation patches
new_cpuids = ("cpuid.STIBP", "cpuid.IBPB", "cpuid.IBRS")

flag = False
for capability in feature_capabilities:
    # A value of "1" means the cluster exposes this feature
    if capability.key in new_cpuids and capability.value == "1":
        print("Found new cpuid::" + capability.key)
        flag = True

if not flag:
    print("No new cpubit found")
[/python]

When I ran the above script against the “empty EVC cluster”, I got the following output.

Output:

vmware@localhost:~$ python emptyEVCCluster.py

Current EVC Mode::intel-ivybridge

Found new cpuid::cpuid.IBPB

Found new cpuid::cpuid.IBRS

Found new cpuid::cpuid.STIBP

vmware@localhost:~$

The output clearly shows that the new cpuids are exposed on the “empty EVC cluster” that we created on the latest vCenter patch. This explains why adding a non-patched host to this cluster fails: that host does NOT support these new cpuids. On the other hand, if you have applied the corresponding ESXi patch (which has been pulled back at the moment), you will not face this issue, because the new cpuids are available on the patched host. Now that you understand this behavior, let us take a look at the simple workaround for this issue.
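Before moving on, the same check can be turned around to ask which of the new cpuids a given host is missing. The snippet below is only a minimal sketch and not part of my original script: `missing_on_host` and `enabled_keys` are hypothetical helper names, and the capability lists are made-up sample data built with a `namedtuple` stand-in. With a live connection, I would expect the cluster list to come from `cluster.EvcManager().evcState.featureCapability` as in the script above, and the host list from the host's `config.featureCapability` property; treat the latter as an assumption to verify in your environment.

```python
from collections import namedtuple

# The three cpuids introduced by the Hypervisor-Assisted Guest Mitigation patches
NEW_CPUIDS = ("cpuid.IBRS", "cpuid.IBPB", "cpuid.STIBP")


def enabled_keys(capabilities, keys=NEW_CPUIDS):
    """Return the subset of `keys` whose capability value is "1"."""
    return {c.key for c in capabilities if c.key in keys and c.value == "1"}


def missing_on_host(cluster_capabilities, host_capabilities):
    """Return the new cpuids that the cluster exposes but the host does not."""
    cluster_keys = enabled_keys(cluster_capabilities)
    host_keys = enabled_keys(host_capabilities)
    return sorted(cluster_keys - host_keys)


# Stand-in for pyVmomi capability objects, which carry .key and .value
Capability = namedtuple("Capability", ["key", "value"])

# Sample data only: an empty EVC cluster exposing all three new cpuids,
# and a non-patched host that exposes none of them
cluster_caps = [Capability(key, "1") for key in NEW_CPUIDS]
unpatched_host_caps = [Capability("cpuid.IBRS", "0"), Capability("cpuid.SSE3", "1")]

print(missing_on_host(cluster_caps, unpatched_host_caps))
```

An empty result would mean the host already supports every new cpuid that the cluster exposes, so the mismatch described above would not apply to it.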

3. What is the workaround?

I understand that this is a problem from a usability perspective, but the good news is that a very simple workaround is available: instead of enabling EVC upfront on the empty cluster, create a non-EVC cluster first, add all the hosts you need, and only then enable EVC with one of the available EVC modes. If you ask me, it is always a good idea to create a non-EVC cluster and enable EVC only after all the hosts have been added.
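If you prefer to script this workaround, the order of operations could look roughly like the sketch below. It is an illustration under stated assumptions, not a tested tool: `build_cluster_then_enable_evc` is a hypothetical function name, and the caller is expected to pass in pyVmomi objects, namely `datacenter.hostFolder`, an empty `vim.cluster.ConfigSpecEx()`, one `vim.host.ConnectSpec` per host, and a blocking helper such as `WaitForTask` from `pyVim.task`. The calls used are `CreateClusterEx`, `AddHost_Task`, `EvcManager()` and `ConfigureEvcMode_Task`.

```python
def build_cluster_then_enable_evc(host_folder, cluster_name, cluster_spec,
                                  host_specs, evc_mode_key, wait_for_task):
    """Create a non-EVC cluster, add the hosts, and enable EVC last.

    Assumed inputs (none of them come from the original script):
      host_folder   -- datacenter.hostFolder
      cluster_spec  -- vim.cluster.ConfigSpecEx(), left empty so EVC stays off
      host_specs    -- list of vim.host.ConnectSpec, one per host
      evc_mode_key  -- EVC mode key, for example "intel-ivybridge"
      wait_for_task -- callable that blocks until a vCenter task completes
    """
    # Step 1: create the cluster without EVC
    cluster = host_folder.CreateClusterEx(cluster_name, cluster_spec)

    # Step 2: add every host while EVC is still disabled
    for spec in host_specs:
        # Second argument is asConnected: connect the host right away
        wait_for_task(cluster.AddHost_Task(spec, True))

    # Step 3: only now enable EVC with the chosen mode
    evc_manager = cluster.EvcManager()
    wait_for_task(evc_manager.ConfigureEvcMode_Task(evc_mode_key))
    return cluster
```

Passing the specs and the wait helper in as arguments keeps the ordering logic separate from connection handling, so it can be exercised with simple stand-in objects before pointing it at a real vCenter.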

I would also recommend reading the “vMotion and EVC Information” section of the VMware KB to understand EVC and vMotion behavior after applying these patches.

4. Some useful resources on EVC

1. Part-1: Managing EVC using pyVmomi

2. Part-2: Managing EVC using pyVmomi

3. Tutorial on getting started with pyVmomi

I hope you enjoyed this post. Let me know if you have any questions or doubts.

Vikas Shitole is a Staff Engineer 2 at VMware (by Broadcom) India R&D. He currently contributes to core VMware products such as vSphere and vSphere with Tanzu, and partly to VCF and VMware Cloud on AWS. He is an AI and Kubernetes enthusiast. He is passionate about helping VMware customers and enjoys exploring automation opportunities around core VMware technologies. He has been a vExpert for 10 years in a row (2014-23) for his significant contributions to the VMware communities. He is the author of 2 VMware flings and holds multiple technology certifications. He is one of the lead contributors to VMware API Sample Exchange, with more than 35,000 downloads of his API scripts. He has been a speaker at international conferences such as VMworld Europe and VMworld USA, and was designated a VMworld 2018 blogger as well. He was the lead technical reviewer of the two books “vSphere design” and “VMware virtual SAN essentials” by Packt Publishing.

In addition, he is a passionate cricketer, enjoys bicycle riding and learning about fitness/nutrition, and aspires to complete an Ironman 70.3 one day.
